General-purpose representation learning from words to sentences

- Felix Hill | University of Cambridge

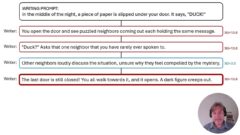

Real-valued vector representations of words (i.e. embeddings) that are trained on naturally occurring data by optimising general-purpose objectives are useful for a range of downstream language tasks. However, the picture is less clear for larger linguistic units such as phrases or sentences. Phrases and sentences typically encode the facts and propositions that constitute the ‘general knowledge’ missing from many NLP systems at present, so the potential benefit of making representation-learning work for these units is huge. I will present a systematic comparison of (both novel and existing) ways of inducing such representations with neural language models. The results demonstrate clear and interesting differences between the representations learned by different methods; in particular, more elaborate or computationally expensive methods are not necessarily best. I’ll also discuss a key challenge facing all research in unsupervised or representation learning for NLP – the lack of robust evaluations.

Ben Ryon

Watch Next

-

Beyond Swahili: Designing Inclusive AI for Bantu Languages

- Alfred Malengo Kondoro

-

-

-

-

-

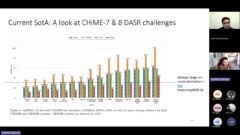

Evaluating the Cultural Relevance of AI Models and Products: Learnings on Maternal Health ASR, Data Augmentation and User Testing Methods

- Oche Ankeli,

- Ertony Bashil,

- Dhananjay Balakrishnan

-

-

-

Microsoft Research India - The lab culture

- P. Anandan,

- Indrani Medhi Thies,

- B. Ashok

-