Enhancing Input On and Above the Interactive Surface with Muscle Sensing

Current interactive surfaces provide little or no information about which fingers are touching the surface, the amount of pressure exerted, or gestures that occur when not in contact with the surface. These limitations constrain the…

The Intern Experience at Microsoft Research Cambridge

Find out what it’s like to be an intern at the Microsoft Research lab in Cambridge, UK. Real interns talk about the projects they are working on, the culture of the lab and what it’s…

ClassSearch: A Classroom Environment for Teaching Web Search Skills

We explore the use of social learning — improving knowledge skills by observing peer behavior — in the domain of Web search skill acquisition, focusing specifically on co-located classroom scenarios. Through a series of interviews,…

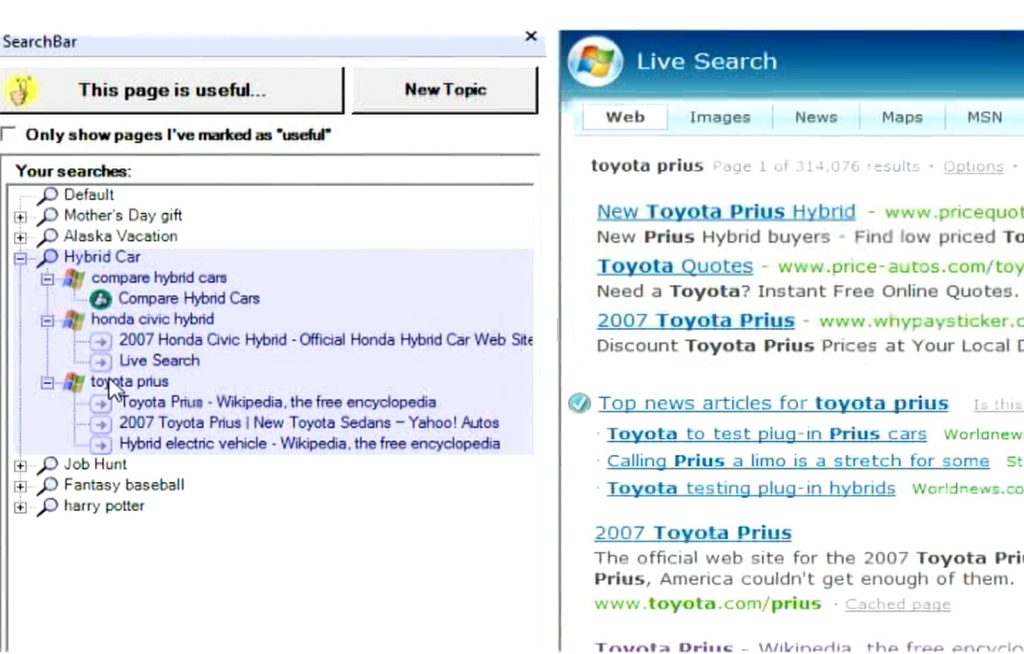

SearchBar: A Search-Centric Web History

SearchBar is a system for proactively and persistently storing query histories, browsing histories, and users’ notes and ratings in an interrelated fashion.

SuperBreak: Using Interactivity to Enhance Ergonomic Typing Breaks

Repetitive strain injuries and ergonomics concerns have become increasingly significant health issues as a growing number of individuals frequently use computers for long periods of time. Currently, limited software mechanisms exist for managing ergonomics; the…

Always-Available Mobile Interfaces

We have continually evolved computing to not only be more efficient, but also more accessible, more of the time (and place), and to more people. We have progressed from batch computing with punch cards, to…

AirWave: Non-Contact Haptic Feedback Using Air Vortex Rings

Input modalities such as speech and gesture allow users to interact with computers without holding or touching a physical device, thus enabling at-a-distance interaction. It remains an open problem, however, to incorporate haptic feedback into…

Muscle-Computer Interfaces (muCIs)

We explore the feasibility of muscle-computer input: an interaction methodology that directly senses and decodes human muscular activity rather than relying on physical device actuation or user actions that are externally visible or audible.