NIPS: Oral Session 8 – Chris J. Maddison

A* Sampling The problem of drawing samples from a discrete distribution can be converted into a discrete optimization problem. In this work, we show how sampling from a continuous distribution can be converted into an…

NIPS: Spotlight Session 4 – Deep Spotlights

J. Mairal, P. Koniusz, Z. Harchaoui, C. Schmid Convolutional Kernel Networks B. Zhou, A. Lapedriza, J. Xiao, A. Torralba, A. Oliva Learning Deep Features for Scene Recognition using Places Database W. Zaremba, K. Kurach, R.…

NIPS: Oral Session 4 – Jason Yosinski

How transferable are features in deep neural networks? Many deep neural networks trained on natural images exhibit a curious phenomenon in common: on the first layer they learn features similar to Gabor filters and color…

NIPS: Oral Session 4 – Ilya Sutskever

Sequence to Sequence Learning with Neural Networks Deep Neural Networks (DNNs) are powerful models that have achieved excellent performance on difficult learning tasks. Although DNNs work well whenever large labeled training sets are available, they…

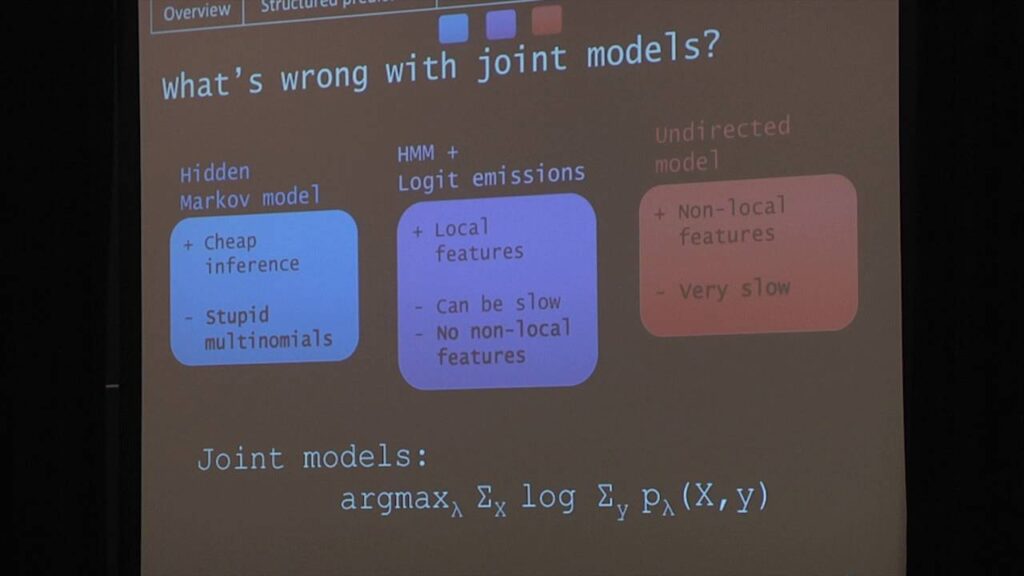

NIPS: Oral Session 4 – Waleed Ammar

Conditional Random Field Autoencoders for Unsupervised Structured Prediction We introduce a framework for unsupervised learning of structured predictors with overlapping, global features. Each input’s latent representation is predicted conditional on the observed data using a…

NIPS: Oral Session 1 – Deeparnab Chakrabarty

Provable Submodular Minimization using Wolfe’s Algorithm Owing to several applications in large scale learning and vision problems, fast submodular function minimization (SFM) has become a critical problem. Theoretically, unconstrained SFM can be performed in polynomial…

NIPS: Oral Session 3 – Dylan Festa

Analog Memories in a Balanced Rate-Based Network of E-I Neurons The persistent and graded activity often observed in cortical circuits is sometimes seen as a signature of autoassociative retrieval of memories stored earlier in synaptic…

NIPS: Oral Session 3 – Matthew Lawlor

Feedforward Learning of Mixture Models We develop a biologically-plausible learning rule that provably converges to the class means of general mixture models. This rule generalizes the classical BCM neural rule within a tensor framework, substantially…

NIPS: Oral Session 8 – Michael Schober

Probabilistic ODE Solvers with Runge-Kutta Means Runge-Kutta methods are the classic family of solvers for ordinary differential equations (ODEs), and the basis for the state of the art. Like most numerical methods, they return point…

NIPS: Spotlight Session 3 – Neuroscience and Neural Coding Spotlights

P. Putzky, F. Franzen, G. Bassetto, J. Macke A Bayesian model for identifying hierarchically organised states in neural population activity L. Buesing, T. Machado, J. Cunningham, L. Paninski Clustered factor analysis of multineuronal spike data…