News and features

AsgardBench: A benchmark for visually grounded interactive planning

| Andrea Tupini, Lars Liden, Reuben Tan, Yu Wang, and Jianfeng Gao

Imagine a robot tasked with cleaning a kitchen. It needs to observe its environment, decide what to do, and adjust when things don't go as expected: for example, when the mug it was asked to wash is already clean, or…

GroundedPlanBench: Spatially grounded long-horizon task planning for robot manipulation

| Sehun Jung, HyunJee Song, Dong-Hee Kim, Reuben Tan, Jianfeng Gao, Yong Jae Lee, and Donghyun Kim

Vision-language models (VLMs) use images and text to plan robot actions, but they still struggle to decide what actions to take and where to take them. Most systems split these decisions into two steps: a VLM generates a plan in…

PlugMem: Transforming raw agent interactions into reusable knowledge

| Ke Yang, Michel Galley, Chenglong Wang, Jianfeng Gao, Jiawei Han, and ChengXiang Zhai

It seems counterintuitive: giving AI agents more memory can make them less effective. As interaction logs accumulate, they grow large, fill with irrelevant content, and become increasingly difficult to use. More memory means that agents must search through larger volumes of…

Argos: Multimodal reinforcement learning with agentic verifier for AI agents

| Reuben Tan, Baolin Peng, Zhengyuan Yang, Oier Mees, and Jianfeng Gao

Argos improves multimodal RL by evaluating whether an agent’s reasoning aligns with what it observes over time. The approach reduces visual hallucinations and produces more reliable, data-efficient agents for real-world applications.

MindJourney enables AI to explore simulated 3D worlds to improve spatial interpretation

| Yuncong Yang, Reuben Tan, Swadheen Shukla, and Jianfeng Gao

MindJourney can enable AI to navigate and interpret 3D environments from limited visual input, potentially improving performance in navigation, planning, and safety-critical tasks.

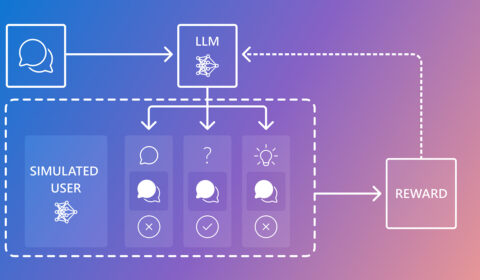

CollabLLM: Teaching LLMs to collaborate with users

| Shirley Wu, Michel Galley, Baolin Peng, Swadheen Shukla, and Jianfeng Gao

Recipient of an ICML 2025 Outstanding Paper Award, CollabLLM improves how LLMs collaborate with users, including knowing when to ask questions and how to adapt tone and communication style to different situations. This approach helps move AI toward more user-centric…

Research Focus: Week of April 21, 2025

In this issue: our CHI 2025 and ICLR 2025 contributions, plus research on causal reasoning and LLMs, countering LLM jailbreak attacks, and how people using AI compare with AI alone. Also, SVP of Microsoft Health Jim Weinstein talks rural healthcare innovation.

Research Focus: Week of March 24, 2025

In this issue, we examine a new conversation segmentation method that delivers more coherent and personalized agent conversations, and we review efforts to improve MLLMs’ understanding of geologic maps. Check out the latest research and other updates.

Magma: A foundation model for multimodal AI agents across digital and physical worlds

| Swadheen Shukla, Jianwei Yang, Reuben Tan, Qianhui Wu, and Jianfeng Gao

Explore Magma, a foundation model that can empower AI assistants to interpret environments, plan actions, and execute tasks across digital and physical spaces. Magma is now available; learn how it advances the field of agentic AI.